Three AI trends from 2025 and three predictions for 2026: focus on speed, web search disruption, AI security threats become real

2025 felt like a continual whirlwind of AI advances. Models got good enough at benchmarks that benchmarks stopped being interesting, and cheaper and smaller models became very capable. Search started reliably answering questions instead of pointing you at a list of links. And a long list of AI security problems that everyone agreed were concerning quietly failed to cause any actual disasters. Which is not a reassuring place to be.

This post is a rough attempt to pin down three things from the last year that may change as we go into next year: why speed may become the real battleground between models, why the economics of web search is starting to look shaky, and why 2026 may be the year AI security issues finally stop being hypothetical.

So below are three observations from 2025, followed by three attempts at predictions for 2026. Let's see in a year which bits age badly!

1. Speed is the next battleground

How have AI models evolved over 2025? Let's compare the moment in January when DeepSeek R1 launched with the latest model Google announced just last week.

DeepSeek R1 had open weights and appeared to have been created at much lower cost than comparable US models (although later insights clarified that some of the initial reporting was overblown). It was the first reasoning model to show its workings to users (revealing its "chain of thought" as it was "thinking"), and it certainly caused a stir (it became the most-downloaded iPhone app, took Nvidia's value down by $600B, and raised the stakes on US / China chip export restrictions). It helped spur an even greater sense of competition among the frontier model companies that has continued all year.

Rolling forward to December 2025, a year later, let's see where we are with Google's new Gemini 3 "Flash" model, introduced last week.

Price and performance are the obvious improvements to look at, and we'll come back to those. But we've also known about the importance of speed when creating successful internet services for some time. Back in 2006, Marissa Mayer (then at Google) revealed that a half-second delay in page loading led to a 20% drop in their advertising traffic (and hence revenue). Greg Linden (then at Amazon) showed that a 100ms delay loading a page leads to a 1% drop in revenue (the original slides have disappeared but there's a version of his Make Data Useful talk on Scribd). Indeed the original success of Google as a search engine compared to its competitors at the time wasn't just due to more relevant search results (via the PageRank algorithm); it was also significantly faster than services like AltaVista. "Speed matters".

Speed is a big focus for Gemini 3 Flash. Its size (number of parameters) doesn't seem to have been revealed. On many benchmarks it is close to state of the art on the price / performance trade-off. And it is super speedy, something we don't yet have widely used benchmarks for. However, here's a challenging example task from Stanley Ulili writing for Better Stack: to "create a fully functional 3D Minecraft clone using Three.js, complete with procedural world generation, player controls, and block interaction, all within a single HTML file". Compared to 5 minutes for Claude Opus, Gemini 3 Flash knocks this out in just over 30 seconds.

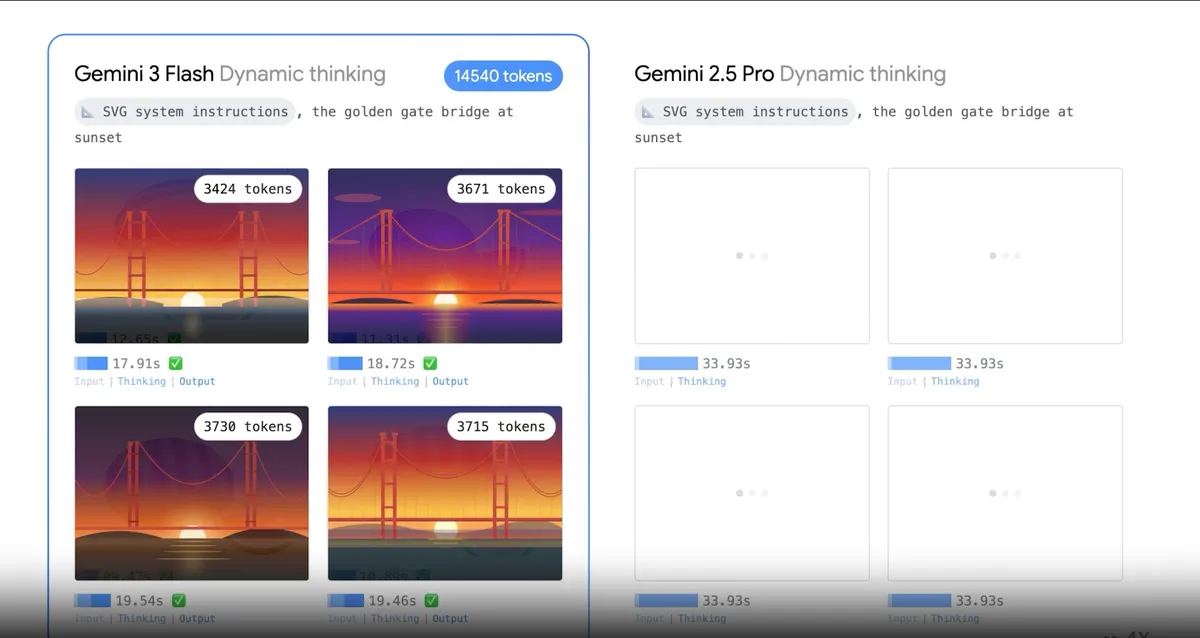

This side-by-side demonstration from Google shows just how much faster and cheaper Gemini 3 Flash is compared to the previous 2.5 Pro (the first task is to generate an illustration in the SVG format). Click to see the video. Gemini 3 Flash is all the way down the street while 2.5 Pro is still lacing its shoes:

So how far have we come since the "DeepSeek moment" in January?

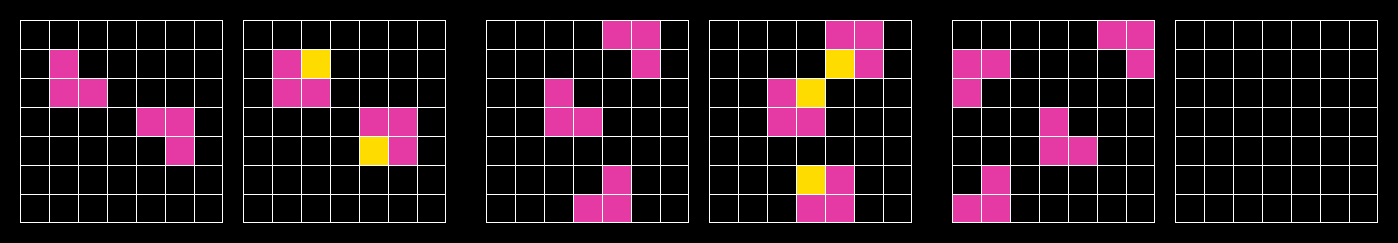

The ARC (Abstraction and Reasoning Corpus) AGI benchmark was introduced in 2019 by François Chollet, originally in his paper On the Measure of Intelligence, to explore whether a system could solve novel reasoning problems from just a handful of examples. It consists of small visual puzzles. You see a number of before / after pairs, and then have to figure out the "after" grid for the final one:

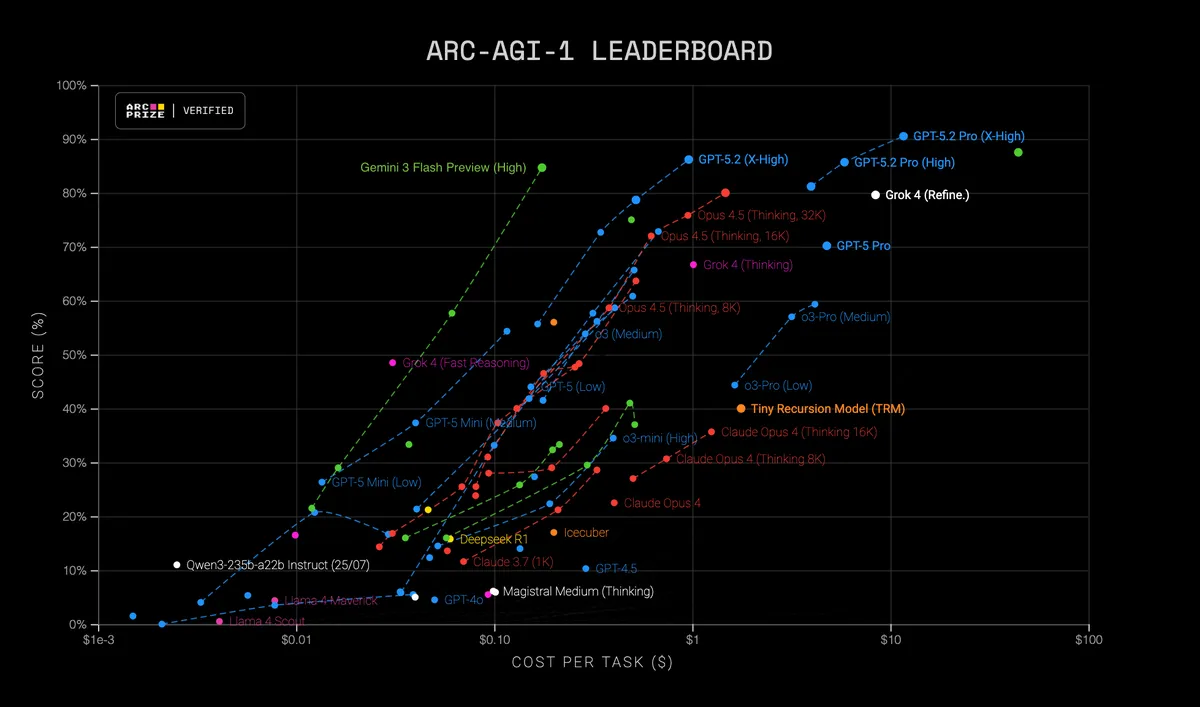

The idea has been continued with the more recent ARC-AGI-2 benchmark. You can see the progress for ARC-AGI-1, with Gemini 3 Flash in green and DeepSeek R1 in yellow. The score goes from 16% task completion at 6 cents a task for DeepSeek to 58% at a similar cost or up to a close-to-state-of-the-art 85% at 17 cents per task for Gemini 3 Flash.
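One rough way to read those numbers is to divide cost per task by the fraction of tasks solved, which gives a cost per solved task. The sketch below uses only the figures quoted above. The labels are made up for illustration, and "a similar cost" for the 58% run is assumed to mean 6 cents.

```python
# ARC-AGI-1 figures quoted in the text: (fraction of tasks solved, cost per task in USD).
# Assumption: the "similar cost" for Gemini 3 Flash's 58% run is taken as $0.06.
results = {
    "DeepSeek R1": (0.16, 0.06),
    "Gemini 3 Flash (58% run)": (0.58, 0.06),
    "Gemini 3 Flash (85% run)": (0.85, 0.17),
}


def cost_per_solved_task(score: float, cost_per_task: float) -> float:
    """Expected spend to get one solved task: cost per attempt divided by success rate."""
    return cost_per_task / score


for name, (score, cost) in results.items():
    print(f"{name}: ${cost_per_solved_task(score, cost):.3f} per solved task")
```

On this crude measure the cheaper Gemini 3 Flash run comes out at roughly a quarter of DeepSeek R1's cost per solved puzzle, although it ignores the fact that the harder puzzles solved at 85% may simply be out of reach at any price for the weaker model.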

There are many benchmarks to choose from when looking at AI models, but equally most of them will show a similar trend. While bigger, more expensive models continue to push the envelope, cheaper and faster models are now often matching or exceeding the performance of their larger cousins from earlier in the year. We even have a full LLM that can now run inside your browser (Mistral's 3B-parameter model, from earlier this month).

Another good benchmark to look at is SWE-bench. This is a real-world software engineering test. Given a codebase and a description of a real issue, the model has to generate a patch to the code that resolves the issue. Here Gemini 3 Flash scores 78% vs. similar scores for top models like GPT 5.2 or Claude Sonnet 4.5, but at 15-30% of the cost. DeepSeek R1 only scored around 50% at the start of the year. A software engineer who can resolve an issue 80% of the time is a very different proposition to one who can do so 50% of the time. And if the tool is faster and more responsive, it will be a more effective coding and thinking partner.

Benchmarks are helpful proxies for real-world usefulness, but don't tell the whole story. For many tasks, models are already good enough, and further improvements in benchmarks aren't relevant compared to advances in interaction design or the tuning of systems for particular personalities or use cases. As Tyler Cowen said in September, "the best LLMs now have near-perfect answers for a wide range of queries. Those answers will not be getting much better, though they may be integrated into different services in higher productivity ways."

In summary, across the benchmarks above, we've seen a remarkable improvement in performance at a similar cost and higher speed, to the point that further improvements are less relevant for many real-world situations. DeepSeek R1 to Gemini 3 Flash represents a big jump forward.

What's going to happen in 2026? My guess: most widely used AI systems will be built on ‘good-enough’ models optimised for latency and cost. The same high performance, but cheaper and faster. As we've seen before with web search and e-commerce, a tool that responds quickly will be used more often and in more situations. Speed matters more now than in early LLM deployments because it changes interaction patterns, not just satisfaction. Once latency drops below a few hundred milliseconds, models stop feeling like tools you query and start feeling more like conversation partners. That’s the threshold Gemini 3 Flash might be taking us over.
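If you want to check which side of that threshold a model sits on, the number to watch is usually the time until the first piece of output arrives, not just the total time. Here is a minimal sketch of a timing harness. `fake_model` is a hypothetical stand-in with made-up delays, not any real API. In practice you would pass in whatever function streams chunks from your provider.

```python
import time
from typing import Callable, Iterable, Iterator


def measure_stream(stream_fn: Callable[[str], Iterable[str]], prompt: str) -> dict:
    """Time how long a streaming response takes to start and to finish."""
    start = time.perf_counter()
    time_to_first = None
    chunks = 0
    for _ in stream_fn(prompt):
        if time_to_first is None:
            # Latency the user actually feels: delay before anything appears.
            time_to_first = time.perf_counter() - start
        chunks += 1
    total = time.perf_counter() - start
    return {"time_to_first_chunk_s": time_to_first, "total_s": total, "chunks": chunks}


def fake_model(prompt: str) -> Iterator[str]:
    """Hypothetical stand-in for a streaming model call (delays are invented)."""
    time.sleep(0.05)  # pretend "thinking" time before the first chunk
    for word in ("speed", "matters"):
        time.sleep(0.01)  # pretend per-chunk generation time
        yield word


stats = measure_stream(fake_model, "hello")
print(stats)
```

Running the same harness against two models on the same prompts gives a simple like-for-like latency comparison, which is about as close as we get to a speed benchmark at the moment.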

2. Extinction of search?

Spoiler: it won't be extinct any time soon!

This has been the year that AI chatbot usage has gone mainstream. ChatGPT weekly active users have gone from 300M to 800M over the year. Gemini by the end of October reached 650M monthly active users, up 3x over the previous 3 months. Google is processing 2500% more tokens than a year ago. All of the business models built upon web search are at risk, both sites that rely on search advertising and those that depend on organic search referrals. As Tim Berners-Lee says:

“If web pages are all read by LLMs, then people ask the LLM for the data and the LLM just produces the result, the whole ad-based business model of the web starts to fall apart,” This system threatens the collapse of the decades-long advertising-based model that has seen the likes of Google and Meta become multitrillion-dollar businesses on the back of powerful ad networks.

Google still has around 90% market share in search. So when it adds AI summaries at the top of results, it has a huge impact. In January, AI Overviews featured in only 6% of US searches, and by the end of the year it's looking more like 16%, and higher still in "informational" searches. From Google's Q2 report: "AI Overviews now has over 2 billion monthly users across more than 200 countries and territories and 40 languages." In the UK, Ofcom says "About 30% of searches now show AI overviews, and more than half (53%) of adults say they see these summaries often". You can see it is hard to get consistent figures, but the growth is clear.

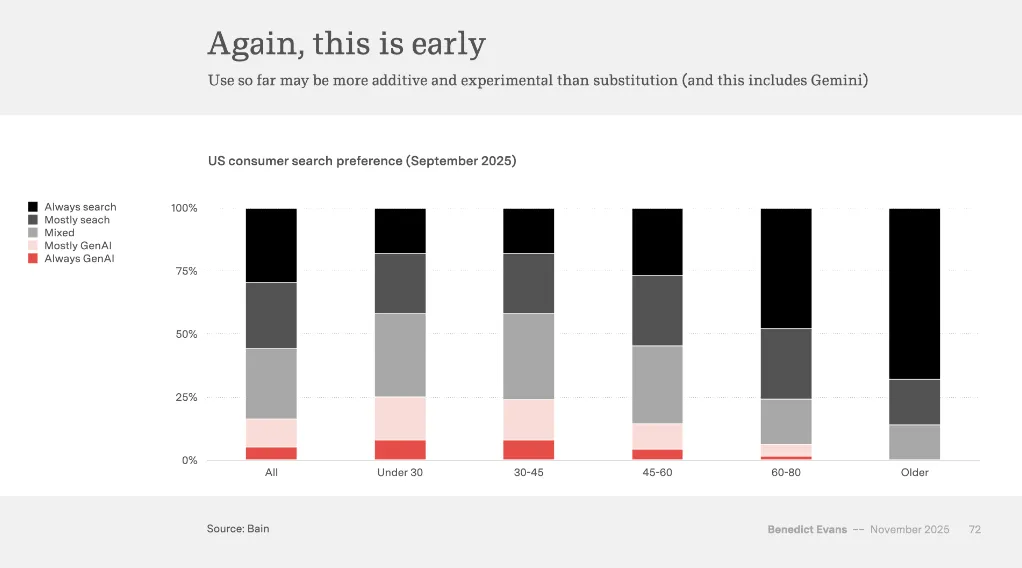

What's the impact? A small Pew Research study in July showed that AI summaries could halve the number of people clicking on search results, and increase the numbers stopping their search after seeing the initial results. Two normally quite sober news sources have used the sensationalist "extinction" language. NPR claimed that AI summaries will be an "extinction-level event" for news publishers (an example is a 30% drop in traffic to CNN). At the smaller end of the scale, the Guardian reports on an "extinction event" for recipe writers, whose visitor numbers are dropping. However, others argue that these claims are overblown. In Benedict Evans's AI Eats the World November presentation, he shows this graph of search preferences in the US, with only a minority switching from search to generative AI so far:

The elephant in the room is how, when or whether OpenAI will introduce its own advertising model (something Ben Thompson of Stratechery thinks is coming: "ChatGPT should obviously have an advertising model"). The rumours are building: references to ad features have been spotted in the Android app recently. Unlike search ads, which slot neatly alongside ranked links, conversational interfaces create a more complex relationship with users, carry a much stronger sense of intent and could target and personalise in a more accurate way. Although monetisation may be inevitable, the best mechanisms are unclear.

My 2026 prediction: we'll see an increasing shift to AI summaries and chatbot conversations, and away from searching and clicking on search results, but we'll also see lots of experiments on integration of paid advertising, referrals and commissions into AI results. It may take most of 2026 for viable models to solidify. In some areas, like healthcare advice, the AI interaction is already much better than web search. Microsoft Copilot on mobile already has health as the most common topic for AI chats. This is where we'll see new vertical providers emerge (for example, more "health AI chat" providers with stronger guardrails and curation, such as OpenEvidence).

3. Cybersecurity attacks through AI actually start happening

In last week's post I linked to an excellent article called The Normalization of Deviance in AI from the Wunderwuzzi blog Embrace the Red. As Simon Willison put it:

This thought-provoking essay from Johann Rehberger directly addresses something that I’ve been worrying about for quite a while: in the absence of any headline-grabbing examples of prompt injection vulnerabilities causing real economic harm, is anyone going to care?

Very true. We have had a year where endless vulnerabilities have been uncovered and documented (check #ai-security for all the times I've posted - a good example is Agentic AI’s OODA Loop Problem by security guru Bruce Schneier). However, to my knowledge, there's been no big, publicised attack. And the deployment of systems into corporate environments is happening at breakneck speed with little understanding of the risks.

Imagine an internal AI agent with access to email, documents, and payment systems being quietly prompt-injected via a supplier PDF or sneaky email. That would be enough to fake payment approvals, change invoice amounts or exfiltrate data. The more "agentic" systems are sold to and integrated across business operations systems, the greater the chance of viable attacks that could be hard to spot.

However hard it is to protect against threats to a single AI system, it is even harder to understand systems of multiple agents collaborating. A paper from last week on Distributional AGI Safety from Google DeepMind is worth reviewing (thanks to Marginal Revolution for the link). Rather than considering safety for a single AI system, the authors consider "the emergence of AGI via the interaction of sub-AGI Agents within groups or systems" (a "patchwork" AGI). Such a system could emerge slowly, unintentionally, without being easy to spot, and indeed could include humans as well as AI agents across a broader system (at this point, if you haven't read the science fiction novella Manna by Marshall Brain, I'd recommend it!). The paper goes on to look at many ways such a patchwork multi-agent AI system / marketplace could be regulated, controlled and made safer.

I hope this doesn't come true, but I expect in 2026 we'll start to see AI security threats go from hypothetical to real, with consequential data breaches starting to happen. I'd be surprised if we see the emergence of a runaway "patchwork" intelligence composed of many AI agents, but the system failures and complexities of multi-agent collaboration are clearly on the horizon.

For next time...

This is already quite a long post! A couple of huge trends to tackle next time include voice interactions, which have come a long way in 2025, and image and video generation, especially the big step forward with Nano Banana.