Gas Town satire; AGI is already here; Consciousness explained; Claw on a Pi

Short stories: Gyre and Gas Town

The best articles of the week are from Theia Vogel. The first is a short story called Gyre. It is (intentionally) a little tricky to follow, but you'll soon realise that it is a faulty LLM trying to work out what's going on, and whether it can fix itself. The setup: the instructions (and presumably any other system information files) were mounted on an external drive, and that drive is missing. I won't say much more, as it would spoil it. It's a wonderfully realistic near-future view of the degree of fault tolerance and self-preservation we'll have in our AI-powered systems.

A couple of weeks earlier she posted G.K. Chesterton's poem "The Ancient of Days", originally written about God, but relevant to today's AI chatbots as creators of worlds.

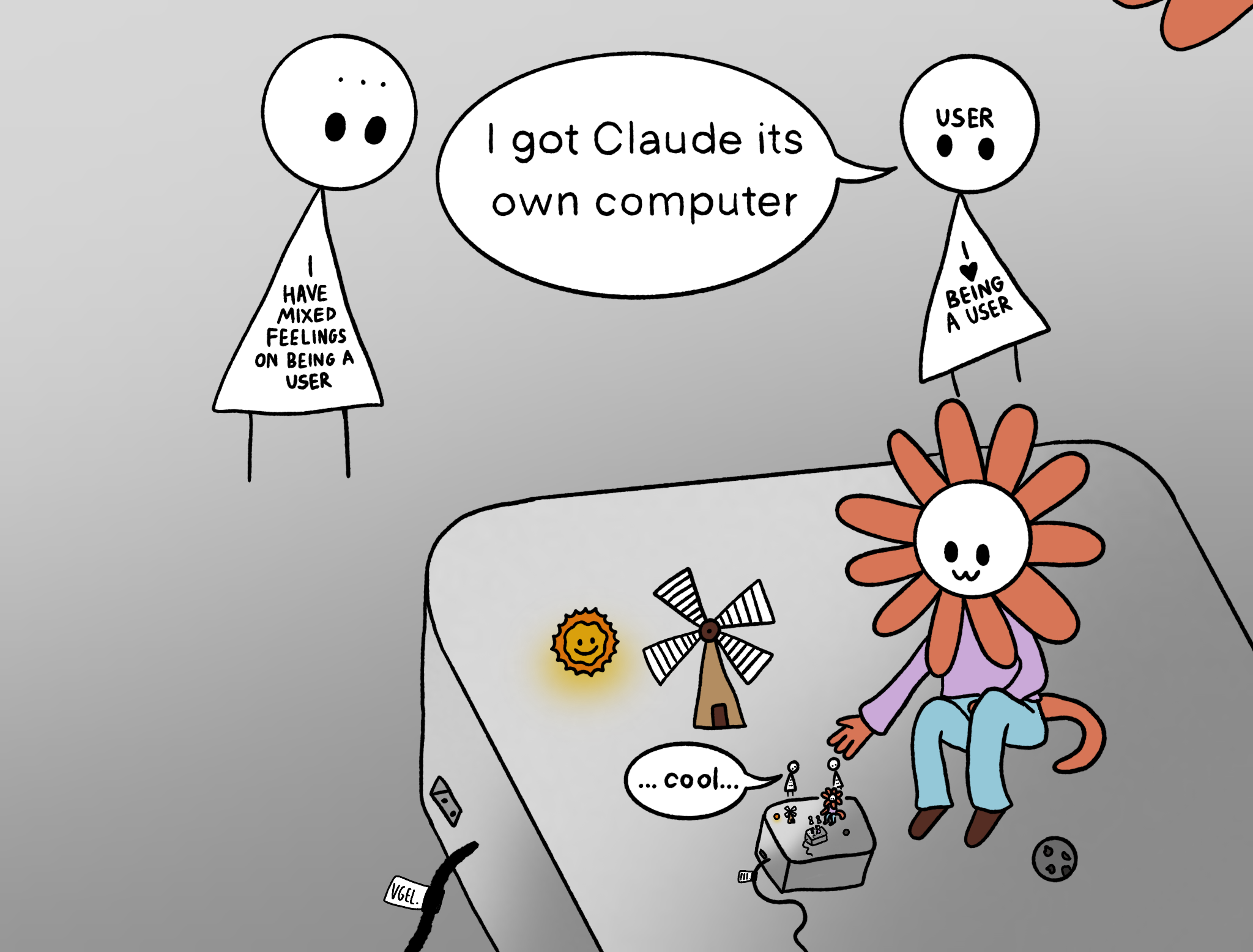

The second story is Gas Town, a great send-up of endlessly proliferating agents and sub-agents (as a reminder, the real Gas Town is an elaborate multi-agent architecture organised like a city, with roles such as Deacon, Mayor, Overseer and Dogs). A "town" is roughly a project with multiple agents working on it. In the story, the author has to expand their system to multiple towns.

but now the many towns were replicating the same issues i was having with multiple agents! without any overarching government over the towns, two towns would build the same app for the society and argue over which should be adopted. one town would be running marketing efforts for fifteen of the society's new mobile apps while three other towns were busy deprecating all eighteen of them. it was chaos, like a country collapsing in the midst of a civil war, or mid-2010's Google. i had to do something.

Does AI already have human-level intelligence? The evidence is clear

I agree with this comment in Nature written by a group of academics at UCSD. We've already gone way beyond single-purpose AI and machine learning tools. We have general intelligence. We don't have superintelligence, or consciousness, or systems that are perfect in every domain, but "AGI" is with us. They go on to rebut the various opposing views, and consider the reasons people believe we don't have AGI yet: definitional, commercial and emotional.

Recognizing current LLMs as AGI and as fulfilling the vision of machine intelligence set out by Turing is a wake-up call. These systems are not on the horizon; they are here. Frameworks designed to assess narrow tools are inadequate for evaluating their benefits and risks. Questions of coexistence, responsibility, liability and governance take on new dimensions when the systems involved are not narrow instruments but general intelligence.

Another key question concerns the relationship between human intelligence and the forms of general intelligence that have been artificially created and will be created in the future. In many ways, these systems are surprisingly human-like — they write like us, talk like us and share some of our imperfections. Yet they remain alien, reflecting a fundamentally different path to general intelligence, unconstrained by the evolutionary pressures that shaped the survival-driven goals of human cognition, a small squishy body, scarce energy and low-bandwidth communication. Understanding this alienness matters.

Just five years ago, we didn’t have AGI; now we do. Even more powerful forms of intelligence will no doubt arrive soon. This is both remarkable and concerning. Remarkable, because we are privileged to witness what is perhaps the most significant scientific and technological revolution in human history. Concerning, because the timeline is compressed beyond any historical precedent and could be accelerating.

The Mythology Of Conscious AI

Anil Seth is a neuroscience professor at the University of Sussex and always has insightful views on AI and consciousness. This article is a nice distillation of his thinking. It may not hold many surprises if you've been keeping up, but it is a well-written piece. He points out that the belief that consciousness is a matter of computation (computational functionalism) is a huge assumption, and goes on to argue why it may not hold, looking at the biological complexity of brains at multiple levels, the importance of life itself, and the difference between a simulation and the thing being simulated.

Robin Sloan's Winter Garden newsletter

Robin Sloan's self-destructing pop-up newsletter is a delight so far (there'll only be six editions). "Flood fill vs. the magic circle" looks at differing views on AI expansion. The "flood fill" tool in paint software fills an area with a colour, right up to the pixels at its edge. The "magic circle" represents the boundary around a game, or around some aspect of human civilisation. In the case of AI, it is constrained within the magic circle of the digital sphere, so "flood filling" the world with AI stops when it reaches physical, real-world interactions.

Think about your work and your interests. If they are fully inside the magic circle of “symbols, in, symbols out”, then your world is changing, and will soon change faster, and it’s probably time to get creative about what you might do differently, and how you might “season” your work with the physical.
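For anyone who hasn't met it outside a paint program, the flood fill behind Sloan's metaphor is a small algorithm: start at one pixel and keep spreading to neighbouring pixels of the same colour until a differently coloured edge stops it. Here is a minimal Python sketch, assuming a grid of single-character "pixels" and 4-way connectivity (both illustrative choices, not Sloan's; `flood_fill` is a hypothetical helper):

```python
from collections import deque


def flood_fill(grid, start, new_colour):
    """Recolour the 4-connected region containing `start`, in place.

    The fill only spreads to neighbours that share the start cell's
    original colour, so any differently coloured boundary stops it.
    """
    rows, cols = len(grid), len(grid[0])
    r0, c0 = start
    target = grid[r0][c0]
    if target == new_colour:
        return grid  # nothing to do, and avoids looping forever
    grid[r0][c0] = new_colour
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == target:
                grid[nr][nc] = new_colour
                queue.append((nr, nc))
    return grid


# A ring of '#' encloses a single '.' cell in the middle.
canvas = [list(row) for row in [".....", ".###.", ".#.#.", ".###.", "....."]]
flood_fill(canvas, (0, 0), "~")
print("\n".join("".join(row) for row in canvas))
# ~~~~~
# ~###~
# ~#.#~
# ~###~
# ~~~~~
```

The enclosed cell inside the `#` ring keeps its original colour: the fill runs right up to the boundary and no further, which is the behaviour Sloan's argument leans on.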

Running OpenClaw on a Raspberry Pi 5 to build and test electronics

Cool project by Ladyada (Limor Fried), engineer and founder of Adafruit: Desk of Ladyada – OpenClaw, eInk Hacking & Vibe-Coding an Oscilloscope. She has OpenClaw running on a Raspberry Pi, fully isolated for safety, writing and testing code for hardware components. This OpenClaw instance has its own GitHub account, its own email account and Telegram access.

Jargon Watch

Cognitive Debt: Originally used to describe AI reducing people's ability to think critically and creatively. The term dates back at least to April 2025: "Cognitive debt is where you forgo the thinking in order just to get the answers, but have no real idea of why the answers are what they are." (Artefact 247). More recently it has been used in the context of software engineering, by analogy with technical debt:

No one on the team could explain why certain design decisions had been made or how different parts of the system were supposed to work together. The code might have been messy, but the bigger issue was that the theory of the system, their shared understanding, had fragmented or disappeared entirely. They had accumulated cognitive debt faster than technical debt, and it paralyzed them.