Clinical AI exits the UK and EU; Elephants and goldfish; Hassabis at YC; The agent HR problem; Sci-fi for foresight

The elephant walks out: Open Evidence and the EU & UK clinical AI exit

Open Evidence is a clinical-AI search tool used by 40% of US doctors across 10,000 hospitals. It handles 18 million clinical consultations a month and was recently embedded in the Epic electronic health record at Mount Sinai. The company has now decided to block the UK and EU, citing "regulatory uncertainty" around the EU's AI Act. But the EU's Medical Device Regulation (MDR) has governed clinical software since 2021. If a piece of software helps a doctor reach a diagnostic or treatment decision, it is a regulated medical device and needs a CE mark before it can be sold, signed off by an independent assessor (a "notified body") who has reviewed the clinical evidence, the safety case and the post-market plan. Calling the tool a "search engine" or a "chatbot" does not change the test. The EU AI Act, phasing in over the past year, sits on top of the medical device rules rather than replacing them, adding its own requirements and fines of up to 7% of global annual turnover.
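To make the MDR test concrete, here is a simplified Python sketch of how Rule 11 in Annex VIII of the MDR classifies standalone software once it qualifies as a medical device. The function and parameter names are my own, and it compresses the legal text into a few booleans, so treat it as an illustration rather than a compliance tool. The product's label is not an input: classification depends on what the software's output is used for.

```python
from enum import Enum


class DeviceClass(Enum):
    I = "I"
    IIA = "IIa"
    IIB = "IIb"
    III = "III"


def rule_11_class(
    informs_diagnosis_or_therapy: bool,
    worst_impact: str = "none",
    monitors_physiology: bool = False,
    vital_parameters_immediate_danger: bool = False,
) -> DeviceClass:
    """Simplified reading of MDR Annex VIII, Rule 11 (illustrative only).

    worst_impact is the worst outcome a decision based on the software could
    cause: "death_or_irreversible", "serious_or_surgery", or "none".
    The product's label ("search engine", "chatbot") is deliberately not a parameter.
    """
    if informs_diagnosis_or_therapy:
        if worst_impact == "death_or_irreversible":
            return DeviceClass.III
        if worst_impact == "serious_or_surgery":
            return DeviceClass.IIB
        return DeviceClass.IIA
    if monitors_physiology:
        # Monitoring vital parameters where variations could mean immediate danger -> IIb
        return DeviceClass.IIB if vital_parameters_immediate_danger else DeviceClass.IIA
    return DeviceClass.I


def needs_notified_body(device_class: DeviceClass) -> bool:
    # Class I software is generally self-declared; IIa and above need a notified body.
    return device_class is not DeviceClass.I


# A clinical search tool whose answers inform treatment choices:
print(rule_11_class(True, worst_impact="serious_or_surgery").value)  # IIb
```

Under this reading, any tool whose answers feed into diagnostic or treatment decisions lands in class IIa at minimum, which is why an independent assessor is involved at all.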

The relevant deadline may be the end of May, when registration in EUDAMED, the EU's central database of medical devices, becomes mandatory. Registration requires a CE mark, so a product without one can't register, and not registering is itself an enforcement trigger.

Plaza Trillo, a healthtech regulatory consultant, on Open Evidence's decision to "geofence" the UK and EU:

The AI Act didn't change the analysis. It changed the legal exposure. A second regulation got bolted on top of an MDR obligation the vendors had already chosen to skip. Two regulations are harder to ignore than one. A geofence is the cheapest way to make both go away.

Naming the AI Act lets the vendor position the geofence as a response to a new and uncertain regulation. Naming the MDR would require admitting the product had been operating in a grey zone the whole time. One framing is sympathetic. The other is a confession.

In the UK, Open Evidence was never procured by NHS trusts through the usual route; what grew instead was "shadow AI": individual clinicians using it through personal accounts. As reported by Distilled Post:

The practical consequences for UK clinicians are direct. Doctors who would have had access to a platform handling millions of evidence-based clinical queries monthly will instead rely on the tools currently available within the NHS digital ecosystem, which do not replicate that functionality at equivalent scale or sophistication. Health systems that had incorporated OpenEvidence into planned digital transformation programmes will need to revise those plans, either identifying alternative platforms or deferring the relevant workstreams.

There does appear to be a route: in 2024 the UK medicines regulator, the MHRA, opened the AI Airlock, a regulatory sandbox for trialling AI medical devices in real clinical settings under MHRA supervision before full market roll-out. Open Evidence chose to bypass the Airlock and block the UK instead. I wonder how many other services will take this approach. The same problems may extend to Google's AI Overviews when they answer a clinical query, and to ChatGPT, Gemini and Claude when used the same way. OpenAI's ChatGPT Health, launched in January, is explicitly not available in the EEA, Switzerland or the UK. Anthropic's Claude for Healthcare, launched four days later, is US-only too.

Elephants and goldfish

Dave Rensin, a Distinguished Engineer at Google, writes about what AI changes in how engineering teams handle context. He introduces two characters. The Elephant is the heavily prompted, context-rich AI session, and the design document it helps you produce ("An elephant never forgets"). The Goldfish is a brand-new session with zero memory, used for testing: it only knows exactly what is put right in front of it. The model is feed-the-Elephant, test-against-the-Goldfish: grow the design document through dialogue with the Elephant, then hand it cold to a fresh Goldfish to check whether the document carries enough context for an unprimed model to implement it correctly. Rensin's slogan: "Design is the new code."
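The feed-the-Elephant, test-against-the-Goldfish loop can be sketched in a few lines of Python. This is my illustration, not Rensin's code: `Model` stands in for any chat-model call that takes a list of messages, and the helper names are hypothetical. What it shows is structural: the Elephant accumulates the whole conversation, while the Goldfish is a fresh session whose only input is the document.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]
# Hypothetical stand-in for any chat-model call: takes the message list, returns text.
Model = Callable[[List[Message]], str]


@dataclass
class Session:
    model: Model
    history: List[Message] = field(default_factory=list)

    def ask(self, prompt: str) -> str:
        # Every call sees the full history of this session.
        self.history.append({"role": "user", "content": prompt})
        reply = self.model(self.history)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def feed_elephant(elephant: Session, turns: List[str]) -> str:
    """Grow the design doc through dialogue; the Elephant remembers every turn."""
    doc = ""
    for turn in turns:
        doc = elephant.ask(f"{turn}\n\nReturn the full, updated design document.")
    return doc


def run_goldfish(model: Model, design_doc: str) -> str:
    """Hand the document cold to a brand-new session with no prior context."""
    goldfish = Session(model)
    return goldfish.ask(f"Implement the following design:\n\n{design_doc}")
```

If the Goldfish's implementation goes wrong, the gap is in the document, so the fix goes back through the Elephant rather than into the Goldfish's prompt.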

Demis Hassabis at Y Combinator: continual learning, the missing hit game, and the Einstein test

Demis Hassabis interviews are always worth hearing. This one is with Garry Tan at Y Combinator. I captured three points. The first is continual learning, which I wrote about last July:

Not having continual learning currently is one of the things holding back agents from doing full tasks. They don't adapt well with the context that you're going to put them in.

The second is a useful hype test. Hassabis thinks he can prototype Theme Park, the 1994 hit video game he worked on, in half an hour now. So the obvious question is:

Why haven't we seen a kid making a hit game that sells 10 million copies?

The third is his bar for genuine AI scientific creativity, which he calls the Einstein test: train a system on the world's knowledge as of 1901, and see whether it comes up with special relativity, as Einstein did in 1905. AlphaGo's Move 37 was creative within Go, but no current system can invent Go from a high-level brief. He thinks this is coming within a couple of years, assuming today's systems are just missing an analogical-reasoning capability (that's quite a big "just"!).

OpenAI on Bedrock, and the agent HR problem

I liked this Stratechery interview with Sam Altman and AWS CEO Matt Garman, in which they discussed Microsoft and OpenAI amending their cloud agreement, leaving OpenAI free to ship products on other clouds, including AWS. Ben Thompson's view is that Azure exclusivity was "actively damaging Microsoft's investment in OpenAI" because enterprises wanted access to OpenAI inside the cloud they already used, and the consequence was rapid Anthropic growth on AWS. As a result, we now have Bedrock Managed Agents, powered by OpenAI: "The easiest way to think about this offering is Codex in AWS".

One of Garman's concerns:

I love the promise of what I can do with some of these really powerful models and agents, how do I make sure that I don't have a company-ending event where I screw it up?

Tyler Akidau, CTO at Redpanda and co-creator of Apache Beam at Google, argues on O'Reilly Radar that the perimeter most enterprises already have is the wrong shape:

The agents aren't the problem. The problem is the missing infrastructure between agents and your data.

His argument is that we have built sophisticated agents but not the systems to manage them: there's no HR department. Agents differ from humans and from traditional software in two ways that human-era governance does not handle: they fail in ways indistinguishable from accurate output, and they execute bad plans at machine scale without pushback. His idea:

Governance must be enforced via channels that agents cannot access, modify, or circumvent.

He calls this out-of-band governance: in-band rules like system prompts and training fail because agents hallucinate around them.
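A minimal sketch of the out-of-band idea, assuming the agent can only reach data through a gateway it does not control. The class and action names are hypothetical, and this is not Akidau's design. In a real deployment the gateway would run as a separate service with its own credentials; in-process Python only approximates that, since private attributes are a convention rather than a barrier. The per-agent call budget is one way to blunt the "bad plans at machine scale" failure mode.

```python
from types import MappingProxyType
from typing import Any, Callable, Dict, Mapping, Tuple


class PolicyViolation(Exception):
    """Raised by the gateway; nothing in the agent's prompt can override it."""


class Gateway:
    """Hypothetical out-of-band policy layer between agents and data.

    Operators fix the policy when the gateway is built; agents only call execute().
    """

    def __init__(self, budgets: Mapping[str, int], tools: Dict[str, Callable[..., Any]]):
        # action name -> max calls per agent; read-only view of a private copy
        self._budgets = MappingProxyType(dict(budgets))
        self._tools = dict(tools)
        self._used: Dict[Tuple[str, str], int] = {}

    def execute(self, agent_id: str, action: str, *args: Any) -> Any:
        if action not in self._budgets:
            raise PolicyViolation(f"{agent_id}: action {action!r} is not permitted")
        key = (agent_id, action)
        used = self._used.get(key, 0)
        if used >= self._budgets[action]:
            # Caps machine-scale repetition of a bad plan
            raise PolicyViolation(f"{agent_id}: budget for {action!r} exhausted")
        self._used[key] = used + 1
        return self._tools[action](*args)
```

The design choice is that denial happens outside the model: a tool the policy does not list is unreachable even if it exists, and the agent cannot talk its way past the budget.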

Sam Altman makes a similar point in the Stratechery interview:

If you're an employee at a company, do you want to have one account for when you use some service, and then should your agent just use your account, or should your agent use a different account so that the server can tell which is which? … What we actually want is something we haven't figured out yet, and maybe it's that when Ben's agent is logging in as Ben, it uses Ben's account but it notes that it's an agent and not the real Ben. We don't even have a primitive to think about that.
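Altman's "notes that it's an agent" has at least a partial precedent: OAuth 2.0 Token Exchange (RFC 8693) defines an `act` (actor) claim that records that one party is acting on behalf of another, while `sub` remains the user whose authority is being used. The sketch below builds such a claim set as a plain dictionary (no signing) and writes an audit entry that keeps the two identities apart. The user, agent and audience values are hypothetical. It records who acted; it does not settle whose decision it was.

```python
import json
import time


def delegated_claims(user: str, agent_id: str, audience: str, ttl_s: int = 900) -> dict:
    """Unsigned token claims for an agent acting on behalf of a user.

    'sub' stays the user whose authority is used; the RFC 8693 'act'
    claim names the party actually making the call.
    """
    now = int(time.time())
    return {
        "sub": user,
        "act": {"sub": agent_id},
        "aud": audience,
        "iat": now,
        "exp": now + ttl_s,
    }


def audit_line(claims: dict, action: str) -> str:
    # A missing 'act' claim means the subject acted directly.
    actor = claims.get("act", {}).get("sub")
    entry = {
        "on_behalf_of": claims["sub"],
        "performed_by": actor or claims["sub"],
        "delegated": actor is not None,
        "action": action,
    }
    return json.dumps(entry)
```

A server receiving this token can log Ben's agent as distinct from Ben while still applying Ben's permissions.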

Extrapolated Futures Archive

Patrick Tanguay's Sentiers No.400 recommended the Extrapolated Futures Archive, a reverse-lookup database for speculative fiction. Describe a situation you are facing, and find the science fiction stories that have already worked through its implications. Given that it is designed to be used by AI systems as well as humans, I thought it would be fun to ask Claude to give it a spin.

I tried it with a scenario from Sam Altman in the Stratechery interview above: an AI agent acts on behalf of a human using their account, and there is no easy way to distinguish whose decision was whose. The archive matches this to the "Cover Identity Becomes Real Identity" idea: a spy whose cover persona caused real harm must face trial for crimes committed in character, raising the question of whether moral responsibility for actions taken under a false identity lies with the actor or with the identity. Here are some of the matching stories:

- Mother Night (Kurt Vonnegut, 1961). An American playwright living in Germany broadcasts Nazi propaganda as cover for US intelligence. After the war he faces trial for war crimes, the Nazis having celebrated his broadcasts as the work of a true believer. Vonnegut's preface gives the moral: "We are what we pretend to be, so we must be careful about what we pretend to be."

- A Scanner Darkly (Philip K. Dick, 1977). An undercover narcotics agent is assigned to surveil himself; as the drug Substance D degrades the link between his two identities he becomes unable to tell which one is doing what.

- Double Star (Robert A. Heinlein, 1956). An out-of-work actor is hired to impersonate a kidnapped politician for a single ceremonial appearance, then keeps having to extend the role, and ends up living the rest of the politician's career as the politician.

Given how much AI development seems to be driven by science fiction the founders have read, this could be a good reference for foresight work.

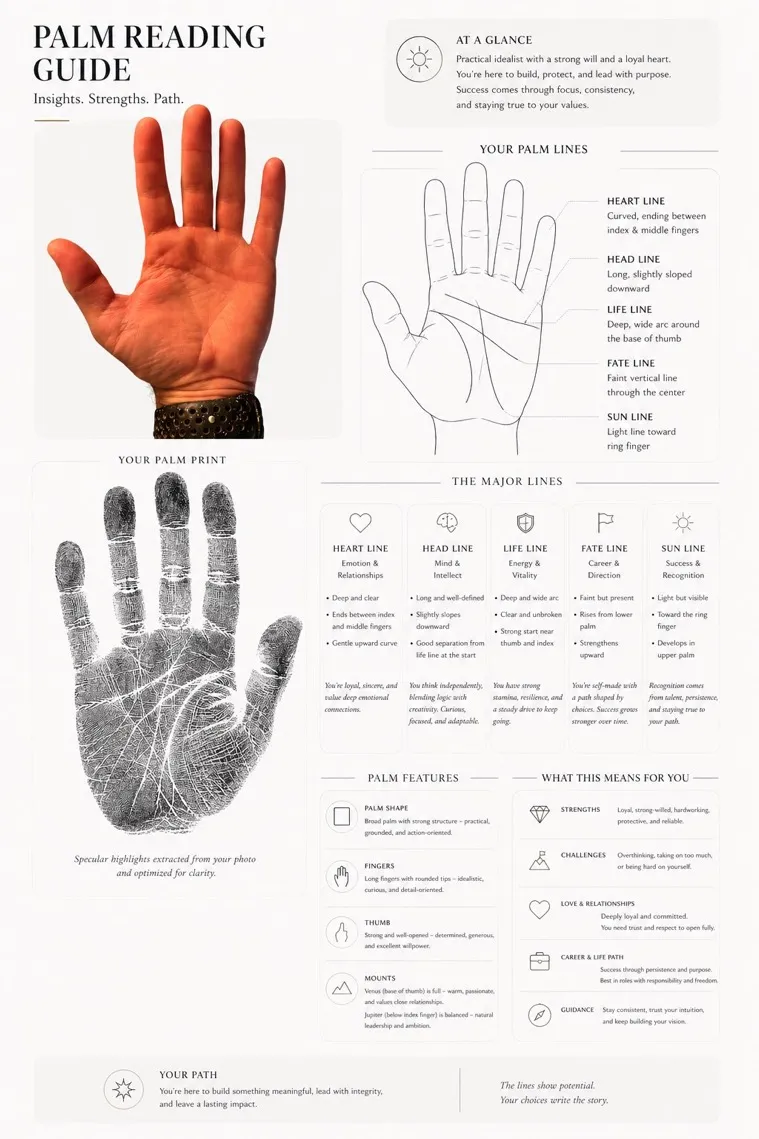

Palm reading by GPT Image-2

Let's finish with a fun one. Linus Ekenstam fed a photo of his open palm to GPT Image-2 and asked:

Based on my hand I want you to make a complete palm reading guide. Analyze the palm. The style of the guide should be clean and minimal, thin lines, rounded cards, overall very expensive looking. Focus on the palm reading, create a simple black on white contour of my main lines, as a little artwork. Do your best.
