Claude Code for everything; OpenAI for health and clinical regulatory concerns; Decentralised training growth; Scaling evaluations; Software libraries without code.

Welcome to 2026! We skipped a week as the last update was 27 Dec, so a few things to catch up on.

Claude Code "for everything": a general purpose tool beyond software development

A flurry of articles at the start of the year covered roughly the same topic: Claude Code is a good agent framework, and once it has access to your personal content you can use it for all kinds of personal productivity. Although it was created as a tool for software developers, it is more widely applicable.

The Personal Panopticon - A manifesto from Molly Cantillon, founder of NOX (AI personal assistance / productivity) and also part of the group who created Jmail (a way to see Epstein's emails via a Gmail-like interface). The start captures this topic perfectly: "A few months ago, I started running my life out of Claude Code." The article is very explicit about the privacy trade-offs involved in allowing Anthropic access to the most personal information. Her claim is "A panopticon still, but the tower belongs to you." Thanks to Marginal Revolution for this link.

Claude Code for Everything: Finally, that Personal Assistant You’ve Always Wanted - Product manager Hannah Stulberg starts a series. She also recommends Carl Vellotti’s free course Claude Code for Everyone (taught within Claude Code itself).

Claude Code and What Comes Next - Ethan Mollick goes over some of the reasons Claude Code works well: the sub-agents, the ability to compact the context as it grows, and skills (highlighted in the 19 Oct WhizzyIdeas update). He also points to the newer Claude desktop app that provides a simpler entry point (as does Simon Willison: The Claude Desktop macOS app has a "Local" mode for Claude Code).

OpenAI for Healthcare

OpenAI announced significant new health products for both clinicians and consumers last week.

ChatGPT Health is a separate experience within ChatGPT that is tuned for medical advice conversations, and can natively import or connect with health data (like Apple Health) and (in the US at least) medical records. We know that health conversations are one of the biggest uses of AI chat. There's huge value in being more educated and informed, and in better understanding test results, symptoms, diseases and treatment possibilities. It should lead to much more productive conversations when you see your doctor. That's very much the angle OpenAI are emphasising: "it's really important for me to understand my CAT scan results before I talk to my doctor".

They're also right on the edge of medical device regulation. For instance, a symptom checker that lists medical conditions that match a user's symptoms could be a medical device. As another example, Google's AI summaries at the top of search results can also offer medical advice, which can easily be incorrect. The Guardian has found cases of dangerous advice (for instance, giving the opposite dietary advice for people with pancreatic cancer, or incorrect interpretations of liver function tests). Although Google seems to have now suppressed AI summaries on those specific searches, it is certain to be a widespread issue. It is hard to see how AI chat or summary text won't be interpreted as having a medical purpose that normally falls within medical device regulations. Regulatory concerns may be why you cannot yet apply to join the waiting list for ChatGPT Health from the EU or UK.

The other half of the announcement was ChatGPT for Healthcare (not sure if I have the naming correct as that is almost the same name! But then OpenAI is notoriously poor at naming things).

This appears to be a healthcare-centric version of ChatGPT Enterprise, with associated security and privacy controls, integration with hospital systems, and models tuned for health information and workflows.

"Over the past two years, we’ve partnered with a global network of more than 260 licensed physicians across 60 countries of practice to evaluate model performance using real clinical scenarios. To date, this group has reviewed more than 600,000 model outputs spanning 30 areas of focus. Their continuous feedback has directly informed model training, safety mitigations, and product iteration. ChatGPT for Healthcare went through multiple rounds of physician-led red teaming to tune model behavior, trustworthy information retrieval, and other evaluations."

It isn't clear whether or when this will be available outside the US.

Three interesting points from Jack Clark

From the latest Import AI newsletter #439:

- The biggest decentralised training runs are growing their compute by 20x per year, versus 5x for frontier labs. Although they may never catch up, they add to democracy at the frontier:

Fundamentally, decentralized training is a political technology that will alter the politics of compute at the frontier. Today, the frontier of AI is determined by basically 5 companies, maybe 10 in coming years, which can throw enough compute to train a competitive model in any given 6 month period. These companies are all American today and, with the recent relaxation of export controls on Chinese companies, may also be Chinese in the future. But there aren’t any frontier training runs happening from academic, government, independent, or non-tech-industry actors. Decentralized training gives a way for these and other interest groups to pool their compute to change this dynamic.

- How well can a frontier AI model fine-tune an open weights model, and do the tasks a human AI researcher does today? Looks like it can make a 20% improvement compared to 60% for a human. I’d expect we’ll see a system come along and beat the human baseline here by September 2026. Self-improving AI here we come.

- Universally Converging Representations of Matter Across Scientific Foundation Models. Research suggesting that foundation models learn a common underlying representation of physical reality.
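To make the 20x vs. 5x growth figures in the first point above a bit more concrete, here is a rough back-of-the-envelope sketch. It assumes both growth rates stay constant, which is a big assumption and probably why catching up is far from guaranteed. The starting gaps are purely hypothetical numbers chosen for illustration; they are not taken from Import AI, and the function name is my own.

```python
import math


def years_to_parity(gap: float,
                    challenger_growth: float = 20.0,
                    leader_growth: float = 5.0) -> float:
    """Years until the challenger's compute matches the leader's.

    gap: how many times larger the leader's compute is today.
    Assumes both annual growth multipliers hold constant forever,
    which real training runs are unlikely to do.
    """
    if gap <= 1:
        return 0.0  # already at or past parity
    if challenger_growth <= leader_growth:
        return math.inf  # the gap never closes
    # Each year the gap shrinks by a factor of challenger_growth / leader_growth.
    return math.log(gap) / math.log(challenger_growth / leader_growth)


if __name__ == "__main__":
    # HYPOTHETICAL starting gaps, for illustration only.
    for gap in (100, 1_000, 10_000):
        print(f"{gap:>6}x behind: {years_to_parity(gap):.1f} years to parity")
```

The point of the sketch is only that a 20x vs. 5x difference closes the gap by a factor of 4 each year, so the answer depends almost entirely on how large the gap is today and on how long those rates can be sustained.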

The Compute Theory of Everything, grading the homework of a minor deity, and the acoustic preferences of Atlantic salmon

These are Samuel Albanie's reflections on 2025. He leads on creating evals for Google DeepMind ("evals" are ways to assess an AI model's capabilities, used throughout the training and development process). It's a lovely piece of writing: funny, thought-provoking, sincere. He spends some of it going back to a 1976 Hans Moravec paper that set out one of the earliest hypotheses that AI would require much more compute power. The evolutionary point is that intelligence is not a fragile accident of primate biology. It is a recurring architectural pattern the universe stumbles upon whenever it leaves a pile of neurons unattended. Moravec said:

"The performance of AI machines tends to improve at the same pace that AI researchers get access to faster hardware."

Meaning we're in for significant advances in 2026. This post is well worth your time.

New products and quick links

- Tiiny AI Pocket Lab - mini PC that can run 100B+ models locally, with no high-end GPUs.

- Gemini on Google TV brings video generation and photo editing to the TV interface.

- A Software Library with No Code. Very clever idea. It ships just the prompts and evals, and you can generate the code for this calendar library yourself in whichever language or framework you like. The post has some good discussion on pros and cons, but as a thought experiment it is genius. Via Simon Willison.

- Arnaud Benard's site auto-generates daily using Gemini

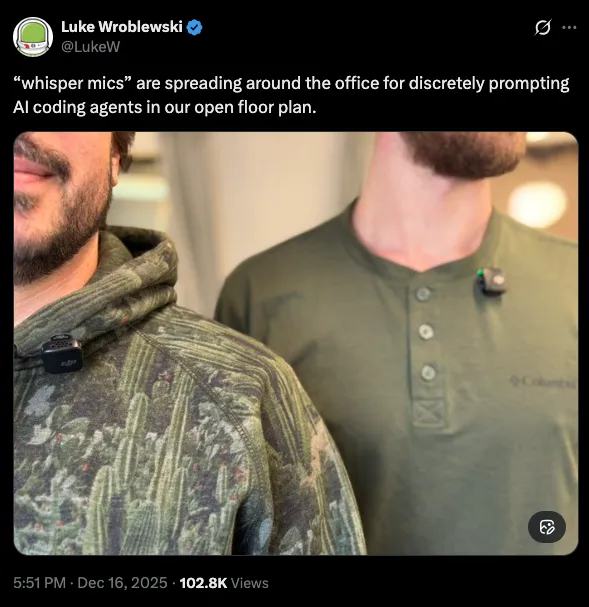

- Lapel-mounted mini "whisper" microphones with noise cancelling for talking to your AI in an open plan office