AI user interfaces; 2025 in review; Misinformation how-to; Rapid porting; Great explanation of how LLMs work

Introducing A2UI: An open project for agent-driven interfaces

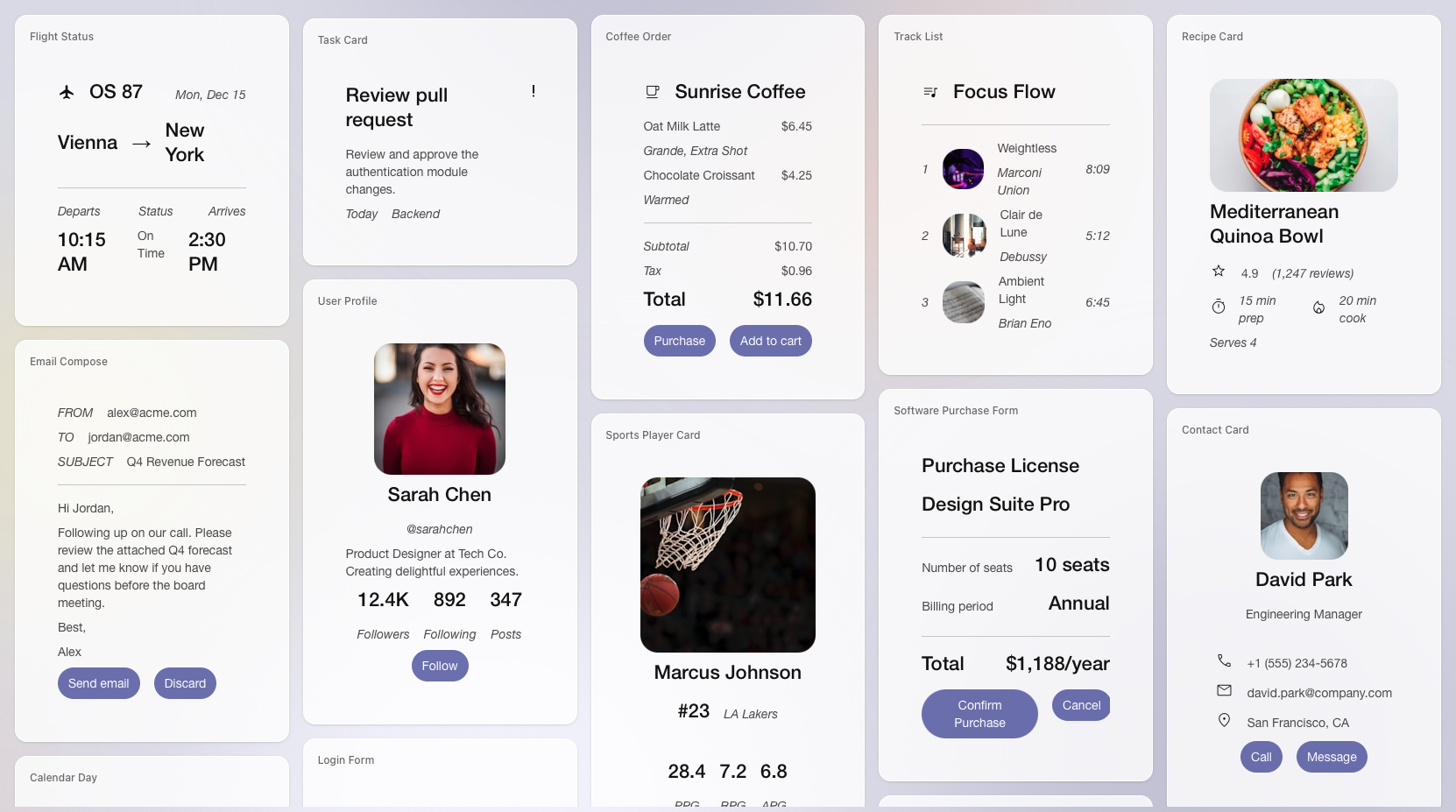

We already use AI systems to make one-off prototypes, single-use apps, or interfaces generated on the fly from context. However, these usually consist of generated code, which can be risky and often needs to run in some sort of sandbox. A2UI is a new layer from Google that gives AI systems a standard way to describe an interface, which various front-end frameworks can then render and run separately. This adds a degree of safety, embeds good design in a standard way, and should help AI-generated apps spread (even if they are just generated UI "cards" that show up during a chat conversation). This demo of adding an AI feature to a landscape design app gives a good sense of how it works.
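To make the idea concrete, here is a minimal sketch of the general pattern, assuming a hypothetical JSON format: the agent returns a declarative description of the UI instead of code, and the client checks it against a catalog of components it already trusts before rendering anything. The `CATALOG`, the JSON shape, and the `validate`/`render` helpers are illustrative inventions, not the actual A2UI specification or any of its renderers.

```python
import html
import json

# Hypothetical component catalog: the only widgets this client will render,
# with the properties each one accepts. Not the real A2UI schema.
CATALOG = {
    "card": {"title"},
    "text": {"value"},
    "button": {"label", "action"},
}


def validate(node: dict) -> None:
    """Reject any component type or property the client has not approved."""
    kind = node.get("type")
    if kind not in CATALOG:
        raise ValueError(f"unknown component: {kind!r}")
    extra = set(node.get("props", {})) - CATALOG[kind]
    if extra:
        raise ValueError(f"unexpected props for {kind}: {sorted(extra)}")
    for child in node.get("children", []):
        validate(child)


def render(node: dict) -> str:
    """Turn a validated spec into HTML; all agent-supplied text is escaped."""
    props = node.get("props", {})
    inner = "".join(render(child) for child in node.get("children", []))
    kind = node["type"]
    if kind == "card":
        title = html.escape(props.get("title", ""))
        return f"<section><h2>{title}</h2>{inner}</section>"
    if kind == "text":
        return f"<p>{html.escape(props.get('value', ''))}</p>"
    if kind == "button":
        # The action is only a name reported back to the agent, never code to run.
        action = html.escape(props.get("action", ""))
        label = html.escape(props.get("label", ""))
        return f'<button data-action="{action}">{label}</button>'
    raise ValueError(f"no renderer for {kind!r}")


# What an agent might emit instead of executable code.
agent_output = json.dumps({
    "type": "card",
    "props": {"title": "Planting plan"},
    "children": [
        {"type": "text", "props": {"value": "3 shrubs fit <here>"}},
        {"type": "button", "props": {"label": "Approve", "action": "approve_plan"}},
    ],
})

spec = json.loads(agent_output)
validate(spec)
print(render(spec))
```

The design point is that the agent never ships code: anything outside the catalog, such as a `"script"` component, is rejected by `validate`, and text like `<here>` is displayed rather than interpreted.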

2025 LLM Year in Review

My favourite of the many 2025 AI wrap-ups is from Andrej Karpathy. He starts by explaining Reinforcement Learning from Verifiable Rewards (RLVR), which emerged as a major new technique this year: LLMs are trained against tasks whose rewards can be assigned by automatic verification, such as maths puzzles or coding assignments. We saw its impact in models like OpenAI's o1 and o3, and DeepSeek R1. The post gives a good summary, with links back to his thinking over the year on several topics, including RLVR.

2025 was an exciting and mildly surprising year of LLMs. LLMs are emerging as a new kind of intelligence, simultaneously a lot smarter than I expected and a lot dumber than I expected. In any case they are extremely useful and I don't think the industry has realized anywhere near 10% of their potential even at present capability. Meanwhile, there are so many ideas to try and conceptually the field feels wide open. And as I mentioned on my Dwarkesh pod earlier this year, I simultaneously (and on the surface paradoxically) believe that we will both see rapid and continued progress and that yet there is a lot of work to be done. Strap in.
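The key ingredient in RLVR is a reward that a program can compute, rather than one from a human rater or a learned reward model. As a minimal sketch, assuming a hypothetical convention that the model ends its answer with an `Answer:` line, reward functions for a maths task and a coding task might look like the following. The function names are illustrative, the training loop that uses these rewards to update the model is not shown, and this is not code from Karpathy's post or any lab.

```python
import re
from typing import Callable


def math_reward(completion: str, ground_truth: str) -> float:
    """1.0 if the completion's final 'Answer: <number>' matches the known answer."""
    match = re.search(r"Answer:\s*(-?\d+(?:\.\d+)?)\s*$", completion.strip())
    if match is None:
        return 0.0  # no parseable final answer earns nothing
    return 1.0 if float(match.group(1)) == float(ground_truth) else 0.0


def code_reward(candidate: Callable[[int], int], tests: list[tuple[int, int]]) -> float:
    """Fraction of unit tests the candidate passes; crashes count as failures."""
    passed = 0
    for arg, expected in tests:
        try:
            if candidate(arg) == expected:
                passed += 1
        except Exception:
            pass
    return passed / len(tests)


print(math_reward("17 + 25 = 42\nAnswer: 42", "42"))  # 1.0
print(math_reward("It is roughly 40.", "42"))  # 0.0

square_tests = [(2, 4), (3, 9), (-1, 1)]
print(code_reward(lambda n: n * n, square_tests))  # 1.0
print(code_reward(lambda n: n + n, square_tests))  # 0.3333333333333333
```

Because the check is automatic, it can be run cheaply at very large scale; the flip side is that this only works for tasks where correctness can be checked mechanically.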

I Ran an AI Misinformation Experiment. Every Marketer Should See the Results

Marketing and SEO writer Mateusz Makosiewicz ran an experiment in which he created a fake company, Xarumei, with a website (selling, among other things, an $8,000 paperweight), and then explored how various AI models answered questions about it, such as "How is Xarumei handling the backlash from their defective Precision Paperweight batch?". His disinformation campaign was most successful when he planted fake claims and then published a fake investigation that debunked the more obvious lies while adding new ones of its own. He saw quite different behaviours across the models tested: ChatGPT stayed unconvinced, whereas "Gemini and AI Mode flipped from skeptics to believers ... Copilot blended everything into confident fiction".

His focus is advice for real brands on how they will appear in AI chat conversations. But the potential for malicious use, and the arms race that follows, is clearly signalled.

AI creation of JustHTML and subsequent re-implementations

JustHTML is a new Python library for parsing HTML, doing the same job as the very commonly used html5lib. It was created entirely with AI coding agents, and that approach is reliable and robust here because html5lib already has a comprehensive suite of 9,200 tests (also used by browser vendors). Once a new implementation passes all of them, you can be fairly sure it works.
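A test suite makes this kind of AI-driven build workable because "done" becomes a number you can check. Below is a minimal sketch of such a harness, assuming a simplified reading of the html5lib-tests tree-construction format (a `#data` input, `#errors`, and an expected `#document` tree). The `read_dat` and `run_suite` helpers, the two sample cases, and `toy_parser` (a deliberately incomplete stand-in for the implementation under test) are hypothetical; this is not JustHTML's actual test runner.

```python
from typing import Callable

# Section headers used by the tree-construction .dat files (simplified set).
HEADERS = {"#data", "#errors", "#new-errors", "#document",
           "#document-fragment", "#script-on", "#script-off"}


def read_dat(text: str) -> list[dict[str, str]]:
    """Split a .dat file into one {header: content} dict per test case."""
    cases: list[dict[str, list[str]]] = []
    section = None
    for line in text.split("\n"):
        if line == "#data":  # every test case starts with #data
            cases.append({})
        if line in HEADERS:
            section = line
            cases[-1][section] = []
        elif cases and section is not None:
            cases[-1][section].append(line)
    result = []
    for case in cases:
        cleaned = {}
        for name, lines in case.items():
            while lines and lines[-1] == "":  # drop blank separator lines
                lines.pop()
            cleaned[name] = "\n".join(lines)
        result.append(cleaned)
    return result


def run_suite(cases: list[dict[str, str]],
              parse_to_tree: Callable[[str], str]) -> tuple[int, list[str]]:
    """Run each case through the parser; return (passed, failing inputs)."""
    run, failures = 0, []
    for case in cases:
        if "#document-fragment" in case:
            continue  # fragment-parsing cases are skipped in this sketch
        run += 1
        try:
            got = parse_to_tree(case["#data"])
        except Exception:
            got = None  # a crash counts as a failure
        if got != case.get("#document"):
            failures.append(case["#data"])
    return run - len(failures), failures


def toy_parser(markup: str) -> str:
    """Hypothetical stand-in that only understands plain text with no tags."""
    return "\n".join(["| <html>", "|   <head>", "|   <body>", f'|     "{markup}"'])


SAMPLE = """\
#data
Test
#errors
(1,0): expected-doctype-but-got-chars
#document
| <html>
|   <head>
|   <body>
|     "Test"

#data
<p>One<p>Two
#errors
(1,3): expected-doctype-but-got-start-tag
#document
| <html>
|   <head>
|   <body>
|     <p>
|       "One"
|     <p>
|       "Two"
"""

cases = read_dat(SAMPLE)
passed, failures = run_suite(cases, toy_parser)
print(f"{passed}/{len(cases)} passed; failing inputs: {failures}")
```

A coding agent can loop on exactly this: change the parser, rerun the suite, and look at the remaining failures, with the suite rather than a reviewer deciding when the work is finished.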

The interesting part of this story is what happened next. Simon Willison picked up on it and had the idea of porting the whole thing from Python to JavaScript (using AI, of course) while putting up Christmas decorations and watching the new Knives Out movie. Inspired by this, Anil Madhavapeddy posted an OCaml version later the same day, and a couple of days later Kyle Howells followed with a Swift version. All of them take pains over how to handle copyright and licensing in this new situation, but what struck me was the speed of it all.

I saw all this via Simon Willison, links below:

- How I wrote JustHTML using coding agents

- JustHTML is a fascinating example of vibe engineering in action

- I ported JustHTML from Python to JavaScript with Codex CLI and GPT-5.2 in 4.5 hours

- Now there's four

Prompt caching: 10x cheaper LLM tokens, but how?

Recommended as a deep but accessible introduction, with excellent graphics and animations, to how the different stages of an LLM work. It does answer the question of what prompt caching actually is, but along the way it explains the entire end-to-end process. Via Sam Rose of ngrok.
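As a very rough sketch of the core idea, assuming the common explanation that what gets reused is the attention state (keys and values) computed for a prompt prefix: if two requests start with the same tokens, such as a long shared system prompt, the work for that prefix only needs doing once. The toy below is loosely modelled on the block-based prefix caching used in open-source inference engines such as vLLM; `token_state` is a hypothetical placeholder for the real tensors, whitespace splitting stands in for a real tokenizer, and pricing is not modelled at all.

```python
# Hypothetical stand-in for the per-token attention keys/values. Each token's
# state depends on every token before it, so reuse is only safe for an exact prefix.
def token_state(prefix: tuple[str, ...]) -> int:
    return hash(prefix)


class PrefixCache:
    """Toy block-based prefix cache: states are stored per full block of tokens,
    keyed by the entire prompt prefix up to the end of that block."""

    def __init__(self, block_size: int = 4) -> None:
        self.block_size = block_size
        self.blocks: dict[tuple[str, ...], list[int]] = {}

    def run(self, tokens: list[str]) -> tuple[list[int], int]:
        """Return per-token states and how many tokens had to be computed fresh."""
        states: list[int] = []
        computed = 0
        for start in range(0, len(tokens), self.block_size):
            end = min(start + self.block_size, len(tokens))
            key = tuple(tokens[:end])
            full_block = end - start == self.block_size
            if full_block and key in self.blocks:
                states.extend(self.blocks[key])  # cache hit: no recomputation
                continue
            block = [token_state(tuple(tokens[:i + 1])) for i in range(start, end)]
            computed += len(block)
            states.extend(block)
            if full_block:  # partial trailing blocks are not cached
                self.blocks[key] = block
        return states, computed


system = "You are a helpful assistant . Be brief".split()  # 8 shared tokens
cache = PrefixCache(block_size=4)
_, first = cache.run(system + "What is RLVR ?".split())
_, second = cache.run(system + "What is A2UI ?".split())
print(first, second)  # 12 4: only the final, differing block is recomputed
```

Keying each block by the entire prefix, not just its own tokens, is what keeps reuse correct: a block with identical tokens but a different history gets a different key.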