75% of Google's code is AI-generated; Verifiable inference at Tinfoil; xAI vs Colorado; Vibe-living

75% AI-generated code at Google, and the missing immune system

According to Sundar Pichai, "75% of all new code at Google is now AI-generated and approved by engineers, up from 50% last fall." The macOS Gemini app went from idea to a native Swift prototype "in a few days" using Antigravity, Google's agentic development platform. This matches the trends I've been documenting, but it is still a terrifyingly fast change.

Worth reading alongside it is a wonderfully funny satirical post by Peter Girnus, written in the voice of a fictional Amazon AI-governance manager (Girnus is in fact a security researcher at Trend Micro). Its premise: an organisation's immune system used to be the sheer time and effort it took to build software, and AI has removed it. "Amazon built an AI system to find all its other AI systems. It found 247. It did not find itself." It's a sharp illustration of the feral software argument from last week: the governance layer becomes part of the proliferation problem rather than a defence against it.

"Verifiable inference" at Tinfoil

Tinfoil is an interesting startup (out of Y Combinator's spring 2025 batch). They're working on private AI inference, where neither Tinfoil, the cloud provider, nor any operator can see prompts, completions or model state. They run open-weights models (like Llama or DeepSeek) inside hardware-backed Trusted Execution Environments (sealed-off areas inside a GPU where code and data are isolated from the operating system, the cloud operator, and anyone else with access to the machine). They don't host closed models like GPT-5 or Claude. The bit I find most interesting is the verifiable inference: cryptographic proof of which model and which weights actually ran on your data. The chip signs a measurement (a cryptographic hash) of the model and weights using a hardware key, and your client checks that signature before trusting any output.
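To make the verification step more concrete, here is a minimal Python sketch of the general attestation pattern. It is not Tinfoil's actual protocol or client, and every name in it (`AttestationReport`, `enclave_sign`, `client_verify`, the key and model strings) is hypothetical. To stay within the standard library it uses an HMAC as a stand-in for the chip's signature. In a real attestation the signing key is asymmetric and never leaves the hardware, so the client only needs a public key, whereas here signer and verifier share a secret.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AttestationReport:
    """Hypothetical attestation report: what the enclave claims it loaded."""
    model_name: str
    weights_digest: str  # hex SHA-256 "measurement" of the weights
    signature: bytes     # tag binding both fields to the hardware key


def measure_weights(weights: bytes) -> str:
    """Hash the raw weights; anyone holding the same file gets the same digest."""
    return hashlib.sha256(weights).hexdigest()


def _payload(model_name: str, weights_digest: str) -> bytes:
    # Canonical encoding so signer and verifier authenticate identical bytes.
    return json.dumps(
        {"model": model_name, "weights": weights_digest}, sort_keys=True
    ).encode()


def enclave_sign(hardware_key: bytes, model_name: str, weights: bytes) -> AttestationReport:
    """Inside the TEE: measure the loaded weights and sign the measurement.

    ASSUMPTION: HMAC-SHA256 stands in for the chip's asymmetric signature.
    """
    digest = measure_weights(weights)
    tag = hmac.new(hardware_key, _payload(model_name, digest), hashlib.sha256).digest()
    return AttestationReport(model_name, digest, tag)


def client_verify(
    report: AttestationReport,
    verification_key: bytes,
    expected_model: str,
    expected_digest: str,
) -> bool:
    """On the client: trust output only if the report is authentic AND names
    the model and weights digest the client expected in advance."""
    expected_tag = hmac.new(
        verification_key,
        _payload(report.model_name, report.weights_digest),
        hashlib.sha256,
    ).digest()
    if not hmac.compare_digest(expected_tag, report.signature):
        return False  # not produced by the trusted key: reject
    return (
        report.model_name == expected_model
        and report.weights_digest == expected_digest
    )


if __name__ == "__main__":
    key = b"hypothetical-hardware-key"
    weights = b"open-weights model bytes"
    published = measure_weights(weights)  # digest the client trusts up front

    good = enclave_sign(key, "example-model", weights)
    swapped = enclave_sign(key, "example-model", b"different weights")
    forged = AttestationReport("example-model", published, bytes(32))

    print(client_verify(good, key, "example-model", published))     # True
    print(client_verify(swapped, key, "example-model", published))  # False
    print(client_verify(forged, key, "example-model", published))   # False
```

The two checks are deliberately separate: a valid signature only shows that the trusted hardware produced the report, while comparing the digest against a value the client already trusts is what ties that report to specific weights.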

LLM pluralism, and the demand for justification

Two pieces I read together this week, both arguing against an AI monoculture.

Rachel Coldicutt is a UK technologist who, since early 2025, has been building the Society for Hopeful Technologists, a network for UK tech workers who want a progressive, independent alternative to the dominant industry voices. Her latest newsletter argues for AI development that embraces plural knowledge systems rather than the monopolistic, extractive default. The piece grew out of a workshop she co-hosted with the Global Center on AI Governance in Nairobi, looking at how AI is being used in Kenya for biodiversity protection, agriculture and other environmental work. Much of the indigenous knowledge that work depends on is, in her words, data that "may never be computer readable, never be accessible in the open, and never be replicable at scale". That is exactly the kind of knowledge that gets left out when an LLM is trained on whatever can be scraped from the public web. She is also worried about cognitive offloading and deskilling, and pushes for "technology that works with people and communities, rather than technology that erases them".

It was Coldicutt who pointed me, via Bluesky, to Martin Sandbu's piece in the FT this week. It is framed around xAI's lawsuit against the state of Colorado. Colorado, alongside Texas, Illinois and California, is part of a wave of US states that have passed substantive AI laws over the past two years. Colorado's AI Act takes effect in June and applies a duty of reasonable care to "high-risk" AI systems used in housing, finance, employment, education, health and government services. In contrast, the Trump White House issued an executive order in December setting up an AI Litigation Task Force to challenge those state laws, and then recommended federal rules that would override and replace them. xAI wants the Colorado law struck down, arguing that being told to filter Grok's outputs according to the state's view of fairness amounts to compelled speech, a First Amendment violation. On 24 April the federal government intervened on xAI's side, the first time it has joined a challenge to a state AI law.

Sandbu sides with Colorado on the legal question (xAI "clearly protests too much") but reaches past the legal arguments to ask what it means to be a democracy when decisions in housing, healthcare and finance can be delegated to a model. "What the Colorado law does is to sweep AI-powered decision-making inside a fundamental demand for justification," he writes. Drawing on the German philosopher Jürgen Habermas and the American political philosopher John Rawls, he argues that giving reasons is fundamental to both the rule of law and ordinary respect between people. Even if an LLM produces a reasoning trace, that is not the same thing:

When we admit that we owe each other justifications, we recognise each other as equals, with all the moral commitment to reciprocity that this entails. That is a commitment which, so far, only humans can make.

Coldicutt's own thread on Sandbu's piece extends the argument. Humans can give reasons, she writes, because "in some cases humans share a social contract & set of goals, which creates the basis for understanding others' decisions. But an LLM is a random number machine designed to be plausible. Any resonance is an accident of plausibility engineering not an indicator of actual intelligence."

AI health advice: 95% to 35%

I'd missed this BBC piece by James Gallagher when it came out. The key finding of the Oxford Internet Institute study it covers is about how ordinary people actually share information: gradually, with omissions and distractions, not as a complete clinical scenario. Chatbots reach 95% accuracy when fed the full scenario in one go, but accuracy collapses to 35% when the study's 1,300 ordinary participants have to describe the symptoms in their own words. Professor Adam Mahdi puts it plainly: "When people talk, they share information gradually, they leave things out and they get distracted." The full paper, Reliability of LLMs as medical assistants for the general public, was published in Nature Medicine.

The 2026 AI Index in charts

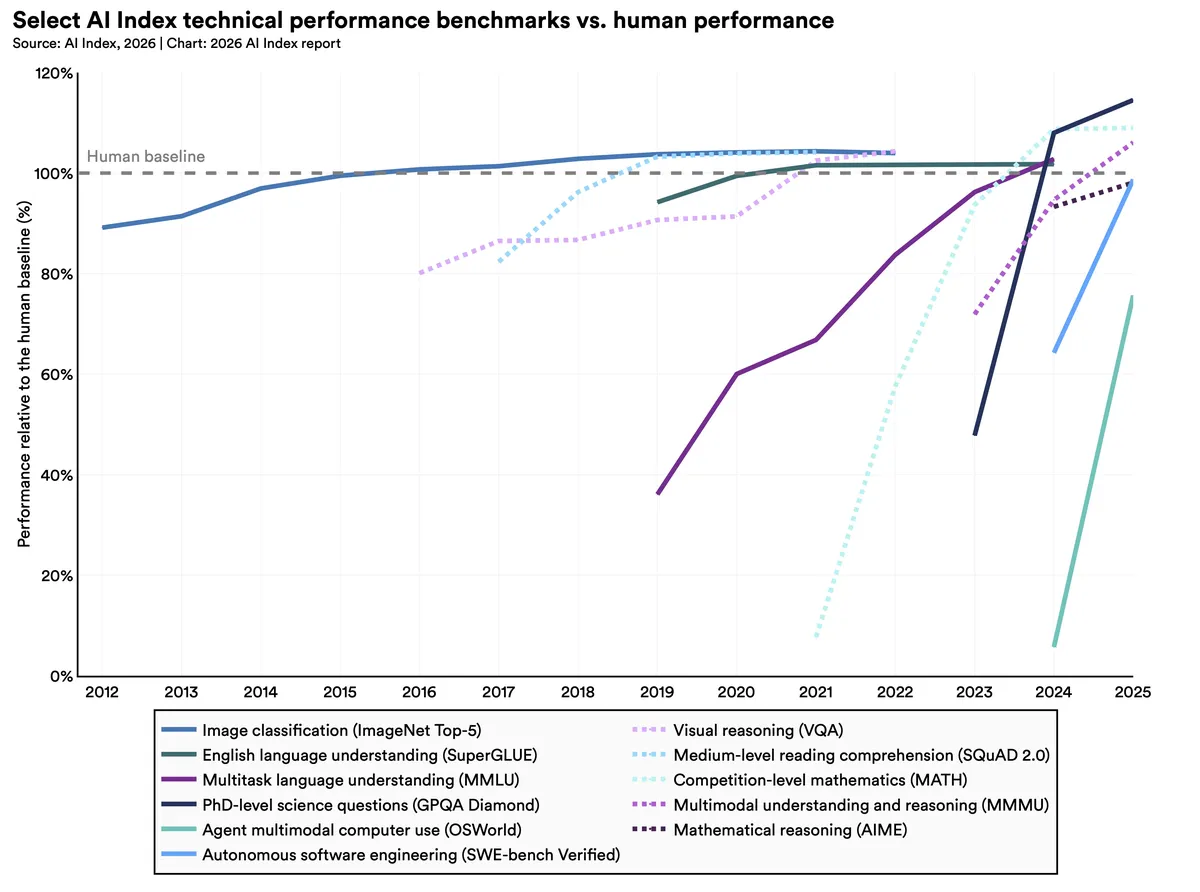

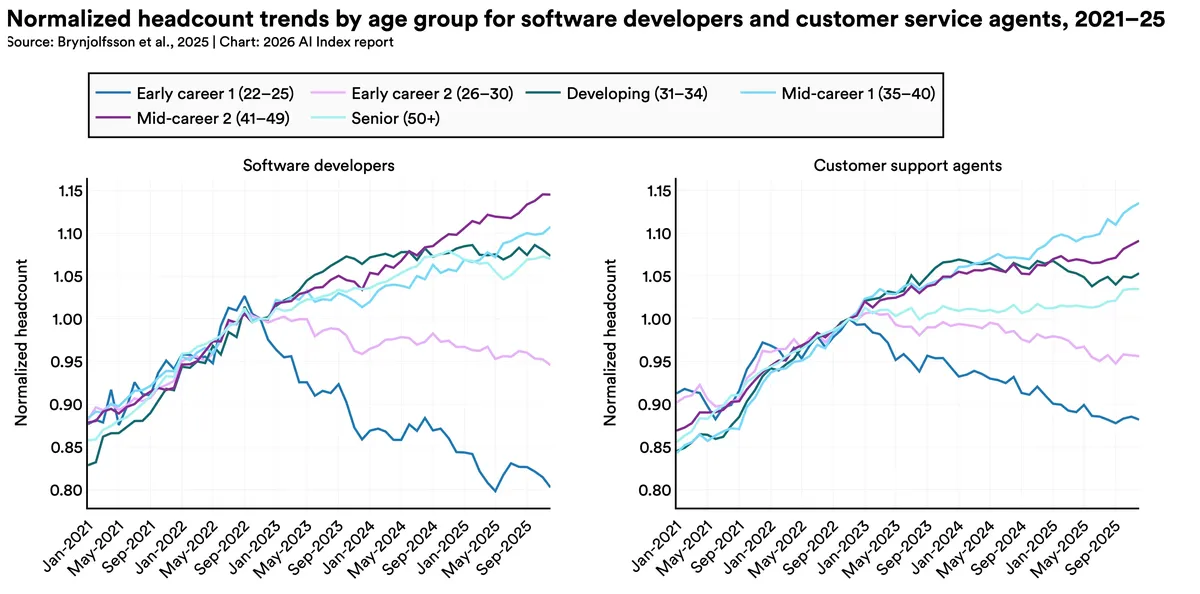

Two charts from Stanford's 2026 AI Index stood out for me, summarised by MIT Technology Review.

The first plots AI performance against the human baseline across selected benchmarks over time. Visual reasoning, reading comprehension and multimodal understanding had already crossed the human line by 2025; autonomous software engineering, mathematical reasoning and agent computer use are heading there through 2026. You'll notice the recent benchmark curves are insanely steep.

The second tracks software-developer employment by age cohort. From a peak in September 2022, the 22-to-25 group is down nearly 20%, while older cohorts have continued to grow. As the article's author, Michelle Kim, puts it: "AI is sprinting, and the rest of us are trying to find our shoes."

NURPLE, and Don't Use A.I. to Do This

I'll close with two pieces that made me laugh.

First, Travis Kirk Lowry on LinkedIn, about what it is like to build with AI right now and how impossible it is to keep up: "None of this was needed at all if you just waited a couple weeks." Link via Roger Rohrbach.

And a more substantial one from the novelist Colson Whitehead in the New York Times, Don't Use A.I. to Do This, ahead of his next novel, Cool Machine. Whitehead claims to love AI and to use it for everything, including a fictional digital agent he calls "the Gooch":

My uncle uses A.I. to buy onions. It used to be you wanted onions, you went to the store and maybe it was full of people, or it was empty — you literally never knew. Now A.I. can calculate when the grocery store is low-traffic, and my uncle just strolls in, la-di-da, and buys onions, no waiting. Imagine a world where you don’t have to wait to buy onions. It’s here.

His position is the one in the headline: AI is fine for everything except art. He skewers brainstorming, dismisses the research-assistant rationale ("I just use it for research. It only gets things wrong or hallucinates crazy stuff 30 percent of the time. I don't need a research assistant that gets things wrong 30 percent of the time. I can do that myself."), and closes with a call to discipline:

Read the book, not the summary. Write the piece, not the prompt. Suffer like the artist you are. It ain't easy, but if it were easy, it wouldn't be worth doing.

Jargon Watch

Vibe-living: the state of using AI to mediate everyday tasks, from inventory to to-do lists to the location of one's own butt. Coined by Colson Whitehead in the NYT essay above ("Everyone Is Using A.I. for Everything nowadays, a.k.a. vibe-living"), and following on naturally from vibe-coding.

Related posts:

- Agentic engineering Cambrian explosion; the radiologist who never saw an X-ray; ten metaphors for AI

- AI security trilemma; AI security compared to autoimmune disorders; autonomous AI malware; can AI be funny; really simple licensing

- Reverse centaurs vs AI; delaying AI regulation; chatbots choosing similar friends; normalising deviance

- Vibe code as tech debt; dancing robots; detecting and changing AI personalities; vehicles and creative AI update